In some cases, you may need to build R from the sources in order to link it with the optimized BLAS library.

In general, it is NOT a good idea to use the functions described in this chapter with graphical user interfaces (GUIs) because, to summarize the help page for mclapply(), bad things can happen. That said, the functions in the parallel package seem to work.

The basic mode of an embarrassingly parallel operation can be seen with the lapply() function, which we have reviewed in a previous chapter. Recall that the lapply() function has two arguments:

- A list, or an object that can be coerced to a list.
- A function to be applied to each element of the list.

Finally, recall that lapply() always returns a list whose length is equal to the length of the input list.
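As a minimal sketch of the lapply() behavior recalled here, the example below applies mean() to a small list serially and then with mclapply() from the parallel package. The input list, the object names, and the choice of two cores are assumptions made for illustration; they do not come from the original text.

```r
library(parallel)  # ships with base R, no installation needed

set.seed(1)

# Hypothetical input: a named list of three numeric vectors.
inputs <- list(a = 1:10, b = c(2.5, 4, 8), c = rnorm(20))

# Serial version: lapply() calls mean() once per list element.
serial_res <- lapply(inputs, mean)

# The result is a list with the same length as the input.
length(serial_res) == length(inputs)

# mclapply() relies on forking, so mc.cores > 1 is not supported on Windows.
# Two cores is an arbitrary choice for this sketch.
n_cores <- if (.Platform$OS.type == "windows") 1L else 2L
parallel_res <- mclapply(inputs, mean, mc.cores = n_cores)

# Both versions should agree element by element.
all.equal(serial_res, parallel_res)
```

Because each element is processed independently of the others, the serial call can be swapped for the parallel one without changing the function being applied.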

Because it is tuned at build time, the ATLAS library is a generic package that can be built on a wide array of CPUs.

Detailed instructions on how to use R with optimized BLAS libraries can be found in the R Installation and Administration manual.
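Before changing anything, it can help to check which BLAS and LAPACK libraries the current R session is using. The sketch below relies on base R functions only. It assumes a reasonably recent version of R, since the "BLAS" entry of extSoftVersion() is not present in older releases, which is why the code checks for it first.

```r
# Version of the LAPACK library that R is using.
lapack_version <- La_version()
print(lapack_version)

# extSoftVersion() reports external software that R was built with.
# Assumption: a "BLAS" entry exists only in newer R releases, so guard for it.
ext <- extSoftVersion()
if ("BLAS" %in% names(ext)) {
  print(ext[["BLAS"]])
} else {
  message("This version of R does not report the BLAS library in use.")
}

# sessionInfo() also prints platform details for the running session.
print(sessionInfo())
```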

As part of the build process, the ATLAS library extracts detailed CPU information and optimizes the code as it goes along.

The Automatically Tuned Linear Algebra Software (ATLAS) library is a special “adaptive” software package that is designed to be compiled on the computer where it will be used.

The AMD Core Math Library (ACML) is closed-source and is maintained and released by AMD. The Intel Math Kernel Library (MKL) is an analogous optimized library for Intel-based chips. The Accelerate framework on the Mac contains an optimized BLAS built by Apple.

In the system.time() output for the solve() call, the user time is just under 1 second while the elapsed time is about half that. Here, the key task, matrix inversion, was handled by the optimized BLAS and was computed in parallel, so the elapsed time was less than the user or CPU time. In this case, I was using a Mac that was linked to Apple’s Accelerate framework, which contains an optimized BLAS.

Several optimized BLAS libraries are available. The AMD Core Math Library (ACML) is built for AMD chips and contains a full set of BLAS and LAPACK routines.

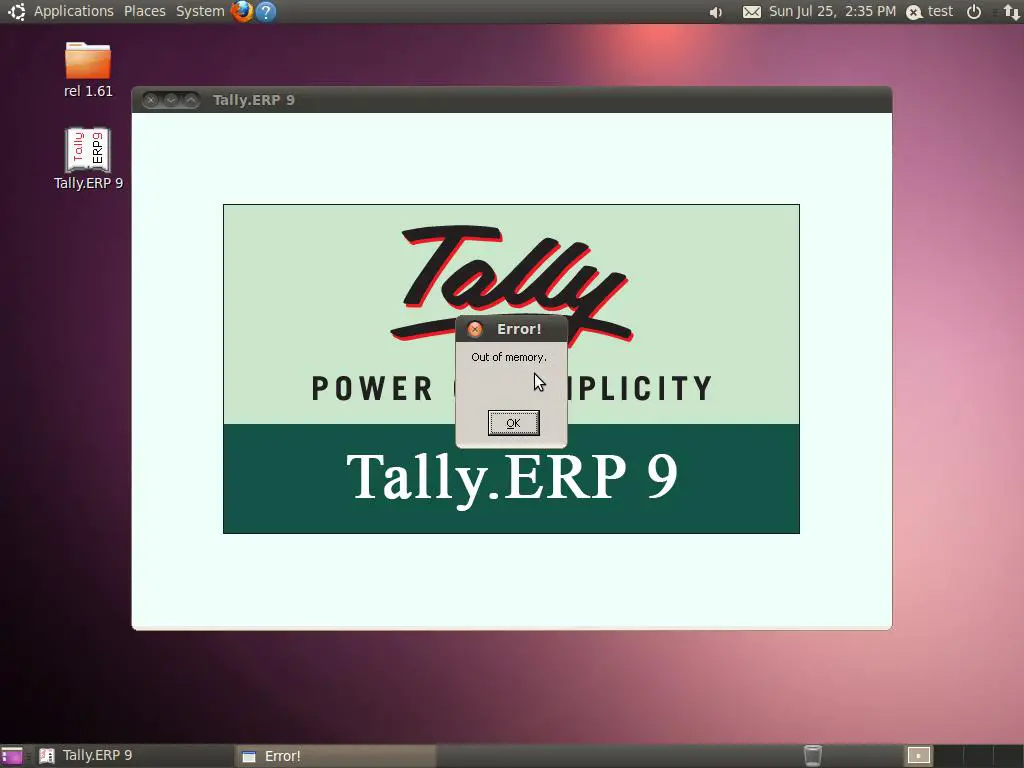

Many computations in R can be made faster by the use of parallel computation. Generally, parallel computation is the simultaneous execution of different pieces of a larger computation across multiple computing processors or cores. The basic idea is that if you can execute a computation in \(X\) seconds on a single processor, then you should be able to execute it in \(X/n\) seconds on \(n\) processors. Such a speed-up is generally not possible because of overhead and various barriers to splitting up a problem into \(n\) pieces, but it is often possible to come close in simple problems.

It used to be that parallel computation was squarely in the domain of “high-performance computing”, where expensive machines were linked together via high-speed networking to create large clusters of computers. In those kinds of settings, it was important to have sophisticated software to manage the communication of data between different computers in the cluster. Parallel computing in that setting was a highly tuned and carefully customized operation, and not something you could just saunter into.

These days though, almost all computers contain multiple processors or cores. Even Apple’s iPhone 6S comes with a dual-core CPU as part of its A9 system-on-a-chip. Getting access to a “cluster” of CPUs, in this case all built into the same computer, is much easier than it used to be, and this has opened the door to parallel computing for a wide range of people.

In this chapter, we will discuss some of the basic functionality in R for executing parallel computations. In particular, we will focus on functions that can be used on multi-core computers, which these days is almost all computers. It is possible to do more traditional parallel computing via the network-of-workstations style of computing, but we will not discuss that here.

Consider the timing of the following computation:

    > system.time(b <- solve(crossprod(X), crossprod(X, y)))
       user  system elapsed
      0.854   0.002   0.448
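The system.time() call in this section can be reproduced roughly as follows. The text does not say how X and y were created, so the simulated data and the dimensions below are assumptions chosen only so the example runs quickly. The timings will differ from machine to machine and depend on which BLAS library R is linked against.

```r
set.seed(1)

# Assumed dimensions; the original text does not state them.
n <- 5000
p <- 200

# Hypothetical simulated predictor matrix and response vector.
X <- matrix(rnorm(n * p), nrow = n, ncol = p)
y <- rnorm(n)

# Solve the normal equations (X'X) b = X'y and time the computation.
timing <- system.time(b <- solve(crossprod(X), crossprod(X, y)))
print(timing)

# With a multi-threaded BLAS, user (CPU) time can exceed elapsed time,
# because CPU time is summed across all cores that did work.
user_time <- timing[["user.self"]]
elapsed_time <- timing[["elapsed"]]
cat("user:", user_time, " elapsed:", elapsed_time, "\n")
```

If the user time is noticeably larger than the elapsed time, that suggests the linear algebra was spread across several cores. With the reference BLAS that ships with R, the two numbers are usually close.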